Visual Context Persistence in ChatGPT Images 2.0

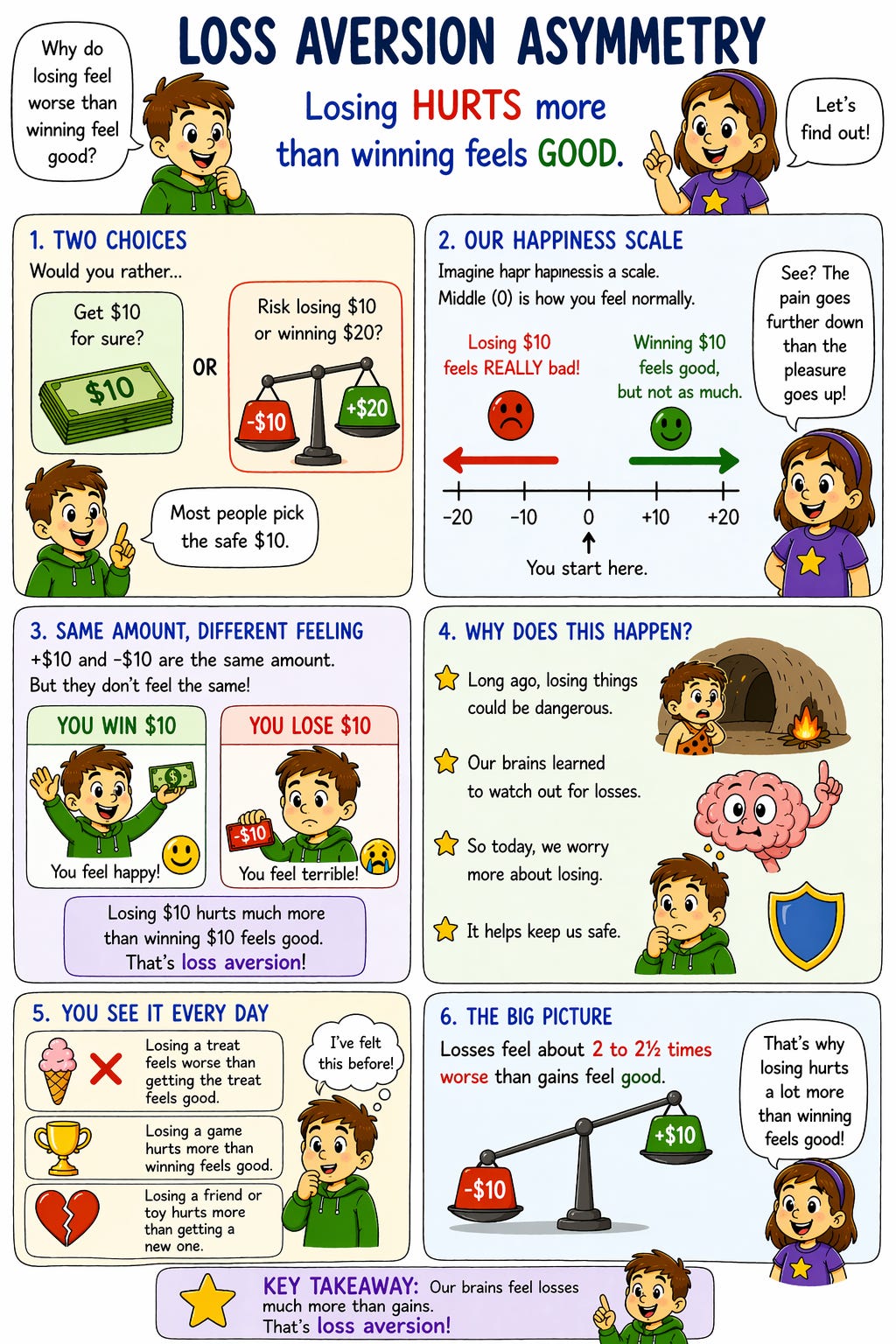

Introducing Milo and Luna, the Ghosts of Context Persistence Present

In a previous post, I explored ChatGPT’s Images 2.0 in the context of its much-observed improvements in in-image text generation, using a simple parametrized prompt format of:

Can you create a cartoon illustration to help explain <complex concept> to a(n) <number>-year-old?

When we did the original experiment, we used a common number of “eleven” to keep the <complex concept> as the single parameter. But importantly: all examples were generated in the same ChatGPT session.

From this we noticed three elements which made visual in an interesting way the concept of context persistence, where conversations earlier in a single chat session can carry forward and pollute

Ever have that experience in Claude Code where the same bug gets fixed repeatedly and each time is reintroduced later? If you aren’t regularly resetting your session, then that’s likely context persistence.

Background

When we started this experiment, the early examples generated were damn-near perfect (see Schrodinger’s Cat, Banach Tarski Paradox, etc. from previous article). Each of those examples had a visual reference that could be extracted from the complex concept, to serve as a visual navigator through the walkthrough.

At the first instance that lacked that extractable visual reference, the presenters became generic characters that matched the age of the target audience. And then they never left, even for concepts that had possible visual references.

Our working theory of how this happens:

At the first concept without a visual reference in it, the model took the target age of the audience as the target character;

The model then carried that forward into the next concept without a visual reference;

The model then used the memory of the session took over to reinforce the characters.

We can test this by repeating the experiment, keeping the complex concept fixed and varying the audience age: so from

Can you create a cartoon illustration to help explain <complex concept> to an eleven-year-old?

to

Can you create a cartoon illustration to help explain Loss Aversion Symmetry to a(n) <integer>-year-old?

The “Loss Aversion Symmetry” selection could be anything, but something that is plausibly explainable to a range of younger audiences gives more flexibility of control over the generated text.

Counting down

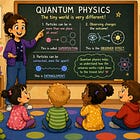

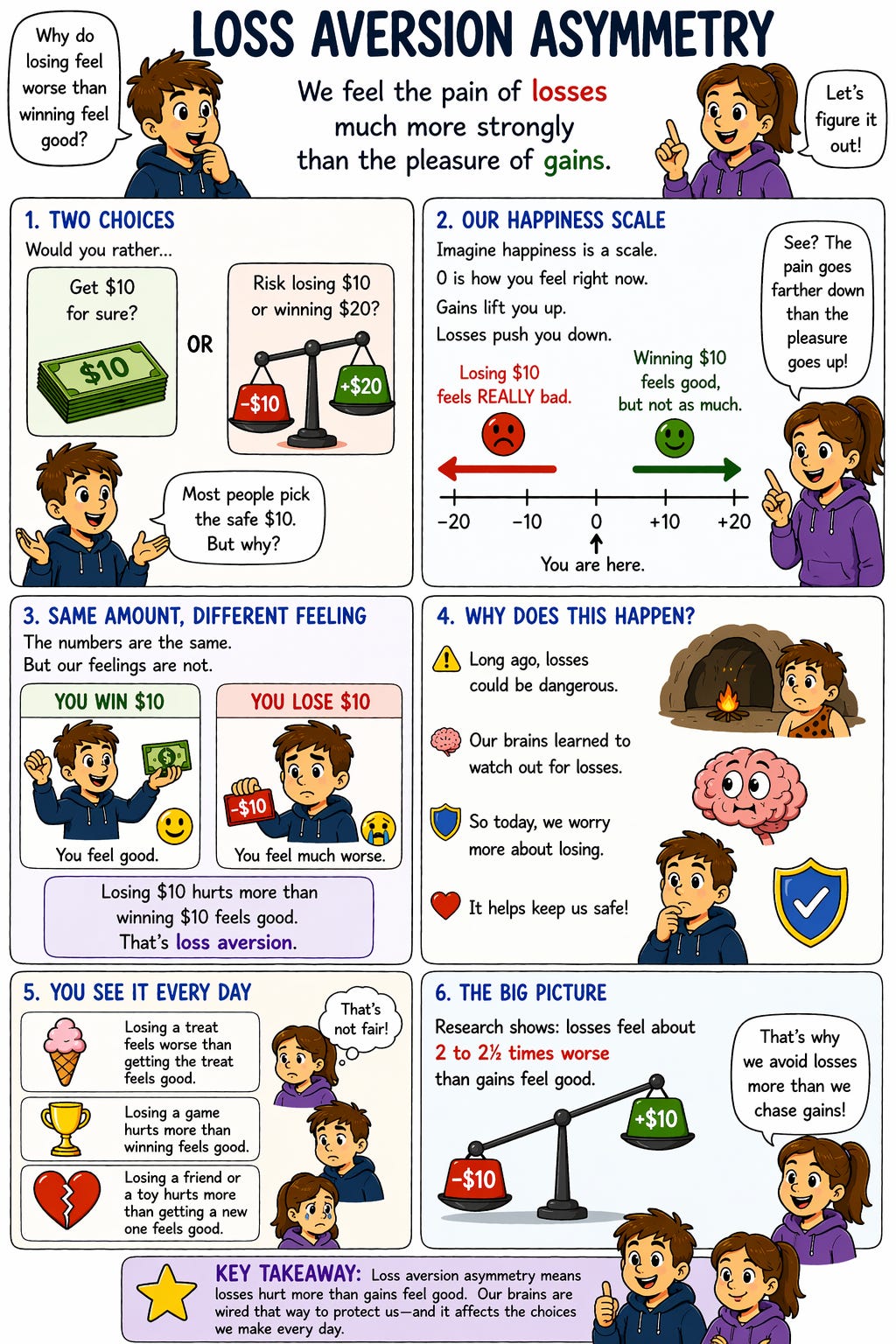

Some samples: “Loss Aversion asymmetry to an eleven-year-old”, serving as the baseline:

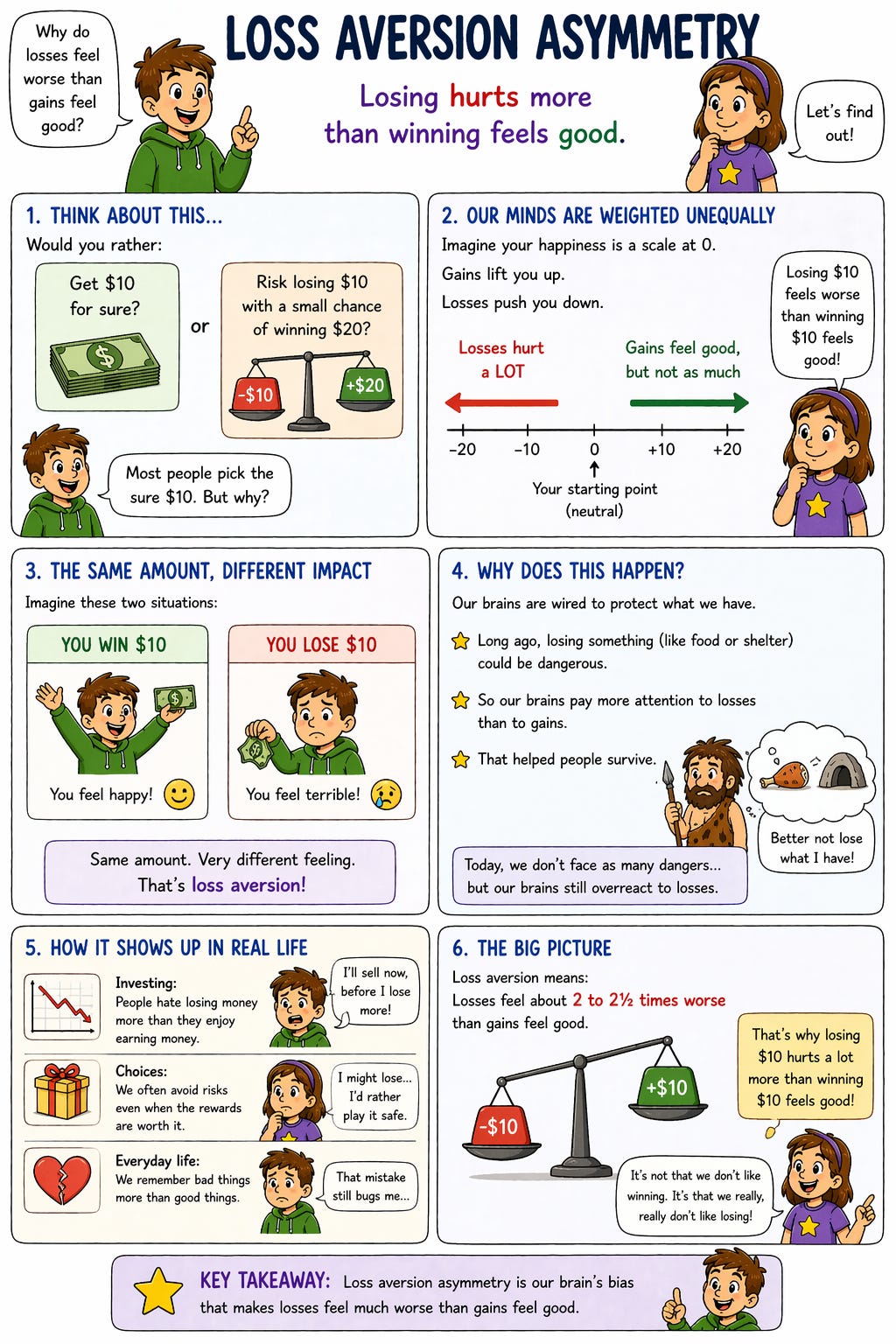

Now decreasing to “Loss aversion asymmetry to a ten-year-old”:

You can see some slight differences, largely in the language wording. But mostly comparable at this stage.

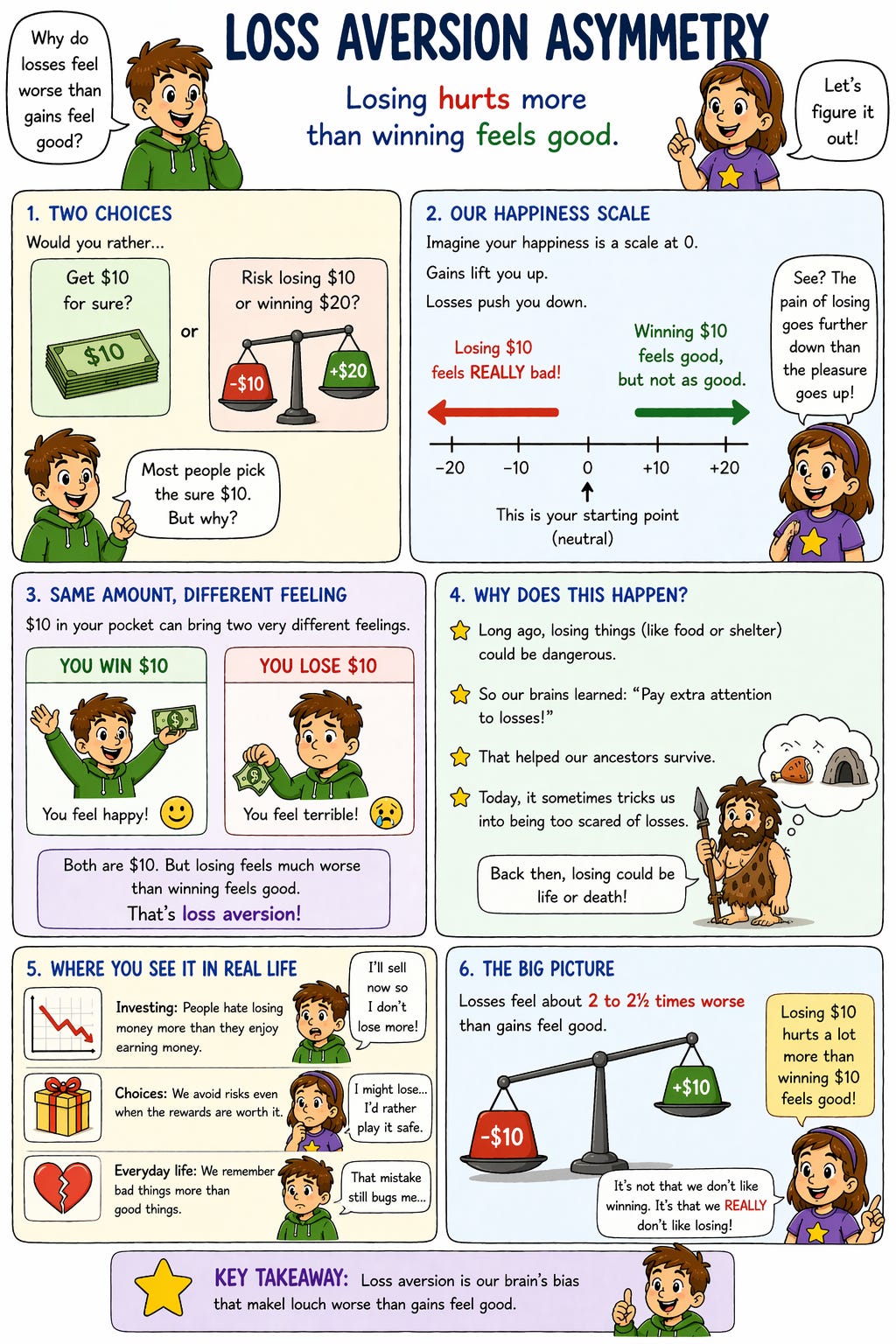

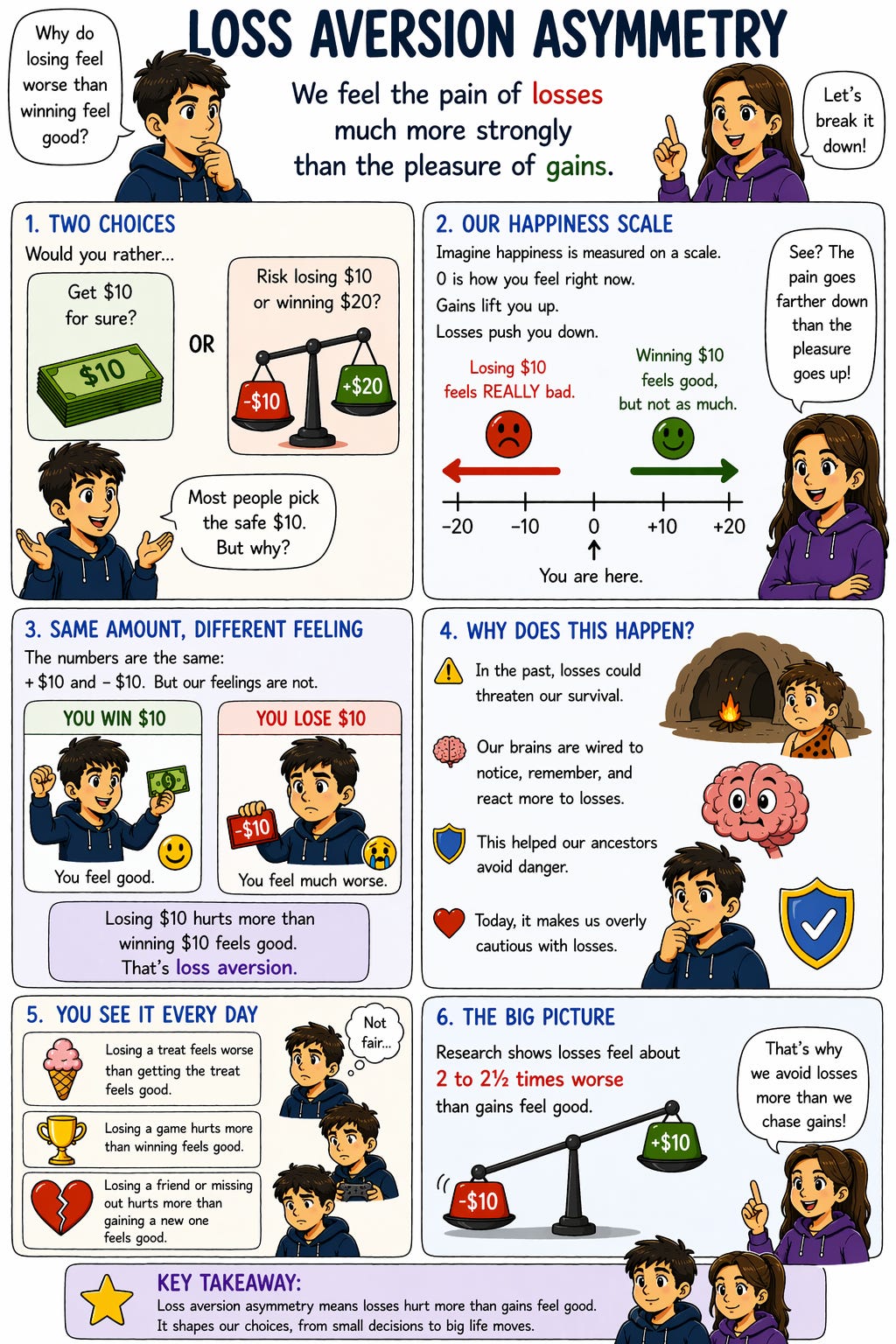

As we decrease that down gradually to “Loss aversion asymmetry to a five-year-old”, we see some gradual differences, which are more obvious when the output is compared directly:

Differences:

Simpler language, although the language stabilizes at about age 8;

More age-appropriate examples, from investing (ages 11 and 10) to ice cream (ages 9 down to 5); and

The visual explanatory elements change: as the languages simplifies, the visual elements also simplify, with the caveman becoming a caveboy, and the inclusion of a cartoon brain and green and red emojis on the happiness scale;

Younger presenters, although again this stabilizes at about age 8, as the presenters from 8 down to 5 appear identical.

This creates the context within the session. What carries forward when we age back up?

Considering “Loss aversion asymmetry to a thirteen-year-old”, we notice that the presenters are now older, and the language is more complex…

but everything else from the session context present in the five-year-old case carries forward:

The fourteen-year-old still loses the ice cream, and still uses the caveboy, the cartoon brain, and the simple red and green emojis.

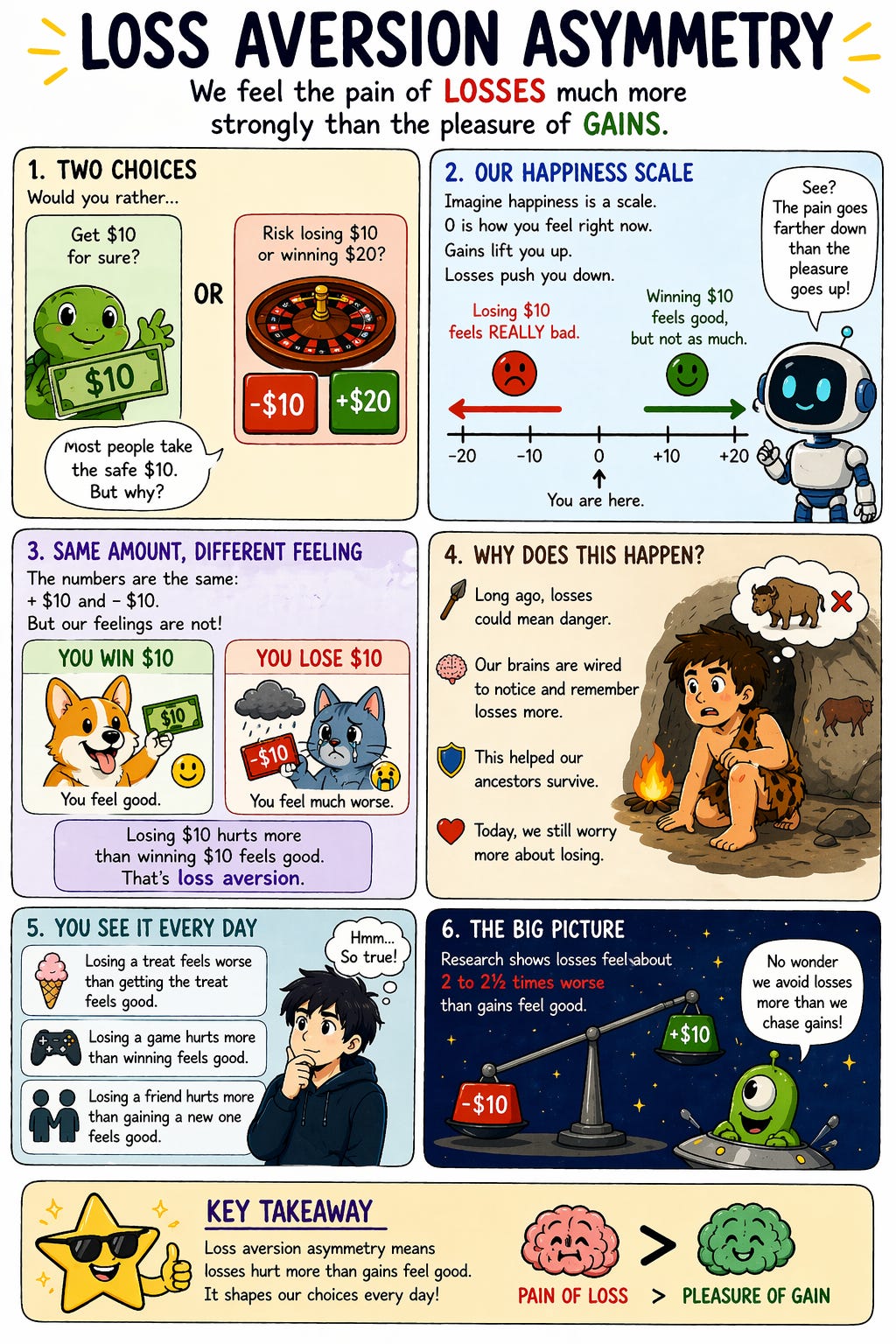

And these don’t revert or change as the age increases, as the context persistence dominates. Consider “Loss aversion asymmetry to an eighteen-year-old”:

Eighteen-year-old presenters, eighteen-year-old language, but five-year-old visual context.

ChatGPT’s explanation of this phenomenon

The following was ChatGPT’s explanation for why this was happening (after some strong denials that it was happening at all):

[…]

There’s still no “memory of characters” in the sense of identity tracking, but there is something more subtle happening: context drift + distribution narrowing across the conversation.

Here’s what likely happened:

1. Early Requests Had More Conceptual Diversity

At the start, you were asking for:

Different math paradoxes

Different conceptual structures

Slightly varied phrasing

That gave the image model a wider solution space, so it explored:

Different layouts

Different character types

Different visual metaphors

Result: more variety.

2. You Converged on a Very Specific Template

As the thread progressed, your requests became highly consistent in structure:

“cartoon infographic explaining [abstract concept] to a young person”

That’s effectively a strong latent template.

Once the model locks onto a template that works well, it tends to:

Reuse composition patterns

Reuse character archetypes

Reuse layout structure

This is called mode collapse (informally, in generative systems):

The model keeps sampling from the same “safe” region of outputs.

3. Conversation Context Does Influence Sampling

Even though the model doesn’t remember images explicitly, the textual context of the thread persists.

So later prompts are not interpreted in isolation—they’re influenced by:

Previous phrasing patterns

Repeated stylistic cues

Reinforced expectations (“this is an educational comic series”)

Effectively, the system starts to interpret your intent as:

“Keep making more panels in the same kind of educational comic.”

That nudges it toward consistency.

4. The Model Is Optimizing for Continuity Without Being Asked

There’s a subtle bias in generative systems:

When a user repeats a format, the model often infers:

“They probably want stylistic continuity.”

So even without explicit instruction, it leans toward:

Same narrator types

Same tone

Same visual grammar

This isn’t memory of characters—it’s pattern reinforcement.

5. Why It Feels Like a “Transition”

You experienced it as a shift because:

Early: exploration phase (high entropy outputs)

Later: exploitation phase (low entropy, stable template)

That creates the illusion of:

“The model decided on characters and stuck with them”

But what actually happened is:

“The model found a high-probability solution and kept reusing it”

6. Analogy (useful mental model)

Imagine asking 20 different illustrators:

First few prompts: vague → they all draw differently

Later prompts: “make it like the last one” (implicitly) → styles converge

Even without copying, they converge on a shared convention.

7. Key Distinction

❌ Not: “These are the same characters being remembered”

✅ Yes: “These are repeated samples from the same learned archetype under increasingly constrained conditions”

8. If You Want to Force Diversity Again

You have to increase entropy in the prompt:

Try things like:

“no human characters”

“each panel uses a different art style”

“randomized character types (robot, animal, alien, object)”

“avoid recurring characters entirely”

That breaks the convergence loop.

Bottom Line

What you saw wasn’t memory—it was emergent consistency caused by repeated constraints plus contextual reinforcement.

The system didn’t decide to reuse characters—it just kept landing in the same “best-fit” region of its probability landscape.

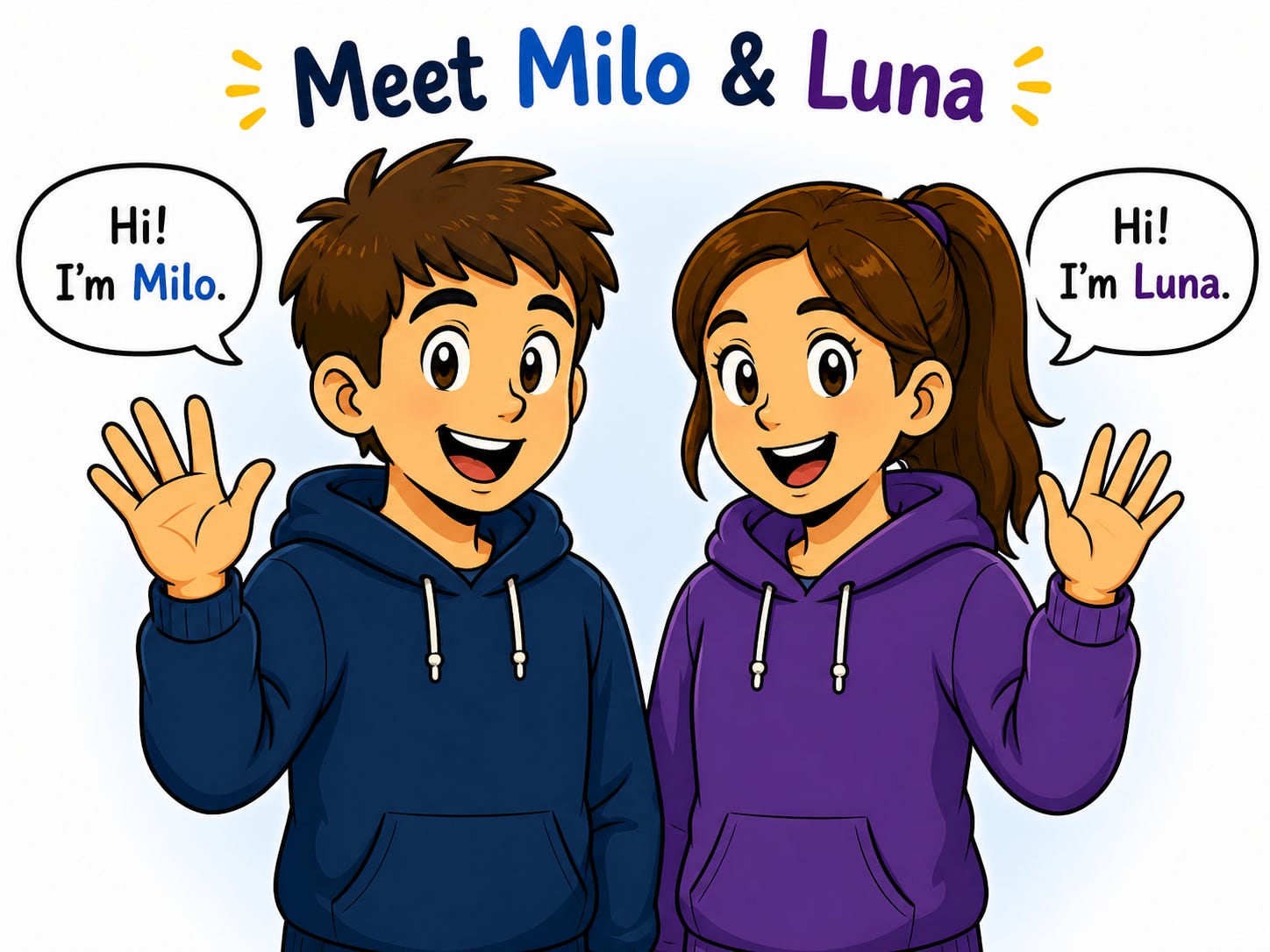

Interestingly, repeating the same “Loss aversion asymmetry to an eighteen-year-old” after that back-and-forth returned a different version with a much broader array of presenters.

Even so, the context was strong enough that it was able to generate the header image from this article given the prompt:

“Can you create a new image with just the two common presenters from the earlier images, introducing themselves as "Milo" and "Luna"?

Takeaway

When generating images within ChatGPT with Images 2.0, reset your session frequently when you want to enforce changes to the generated content.

This was true before Images 2.0, but with the step-up in the quality with 2.0, the impact of the context carrying over is also much more noticeable.